Faster import of data into MySQL

Importing data from a .csv file into a MySQL database using Workbench’s data import wizard is notoriously slow. My recent import of a (500000, 6) shaped table into MySQL using the wizard took over an hour’s time.1

One more reason for more people to prefer loading and analysing data using Pandas.

Objective

To load data from a local .csv file into MySQL through LOAD DATA INFILE.

Prerequisites

- MySQL installed - I did it through

homebrew. - MySQL Workbench (Optional) - SQL Client & Visual tool for databases.

Create table to import data

Firstly, we need a table in MySQL for the data to be imported into. If the table already exists, all fine. Else, we need to create a new table in MySQL either through the command line or through MySQL Workbench.

My shortcut 👉 Use Workbench’s data import wizard to start the process of importing the

.csvfile, cancel the process right after and purge the imported rows. This leaves us with a nice parametrised empty table to fast import later.

To do this,

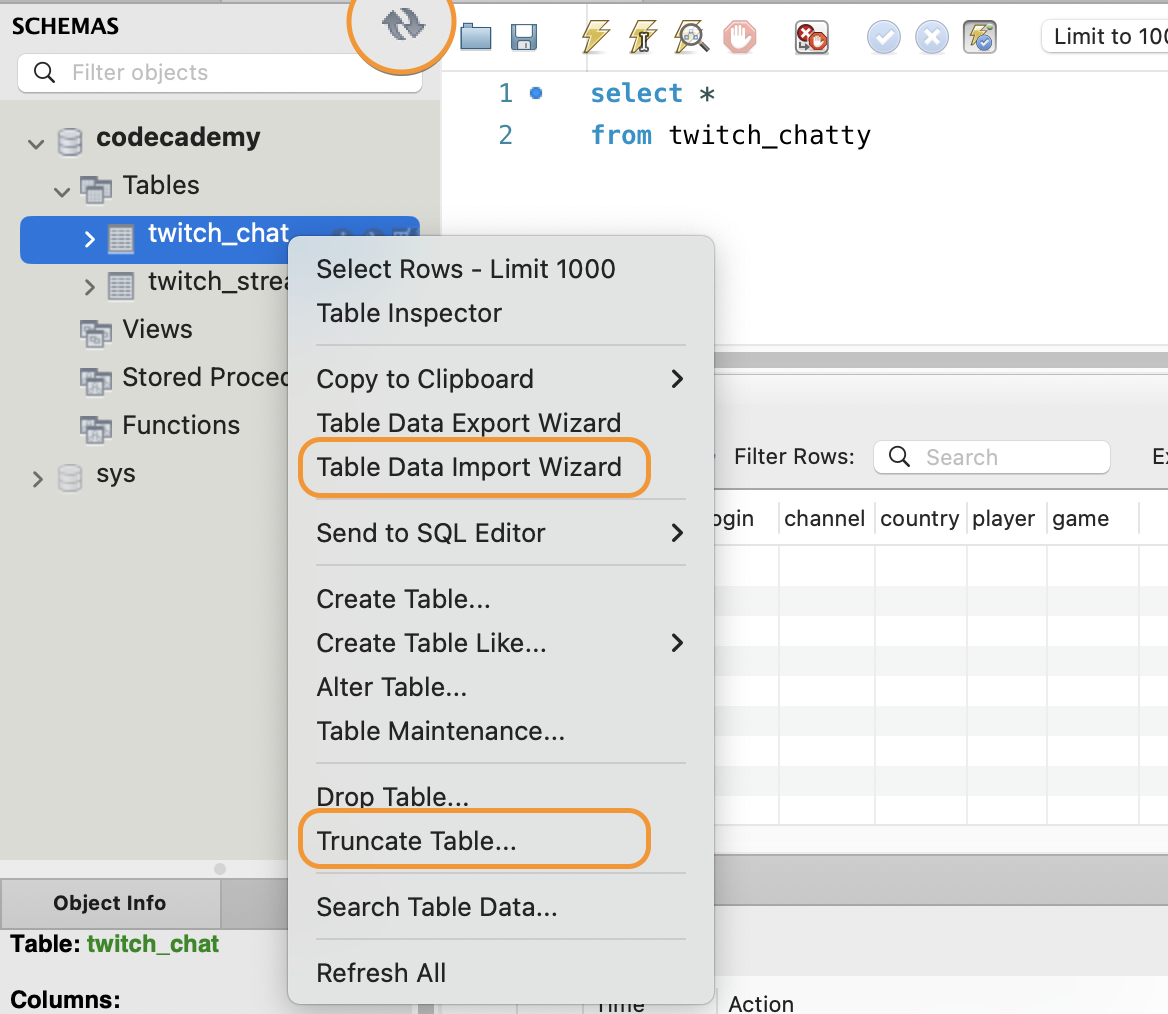

- In MySQL Workbench, right-click on the database (schema) into which you want the table added.

- Click on

Table Data Import Wizard - The wizard will take you through the steps to import data.

- In the final page of the wizard, you’ll be able to see the log for the import. Once the log is active, and you see multiple lines of

- Data import, we’re good to go. - Cancel the process and refresh the schemas using the 🔄 button. You should see the new table appear.

- Right-click on the table and hit

Truncate Table.

We now have a proper empty table for data imports!

LOAD DATA INFILE

1

2

3

4

5

6

7

-- In SQL Workbench or command line (mysql> ...).

load data local infile '~/path/to/file.csv'

into table database_table

fields terminated by ','

enclosed by '"'

lines terminated by '\n'

ignore 1 rows;

Errors

file request rejected/ loading local data disabled

If the

local_infileoption is not enabled in both the client and server sides, we’ll encounter an ERROR 2068 or 3950 with an import fail.

This variable controls server-side LOCAL capability for LOAD DATA statements.

In our case the client (Workbench) and the server (MySQL Server) coexists on the same machine. So we can edit the existing MySQL connection in Workbench to allow LOCAL capability.2

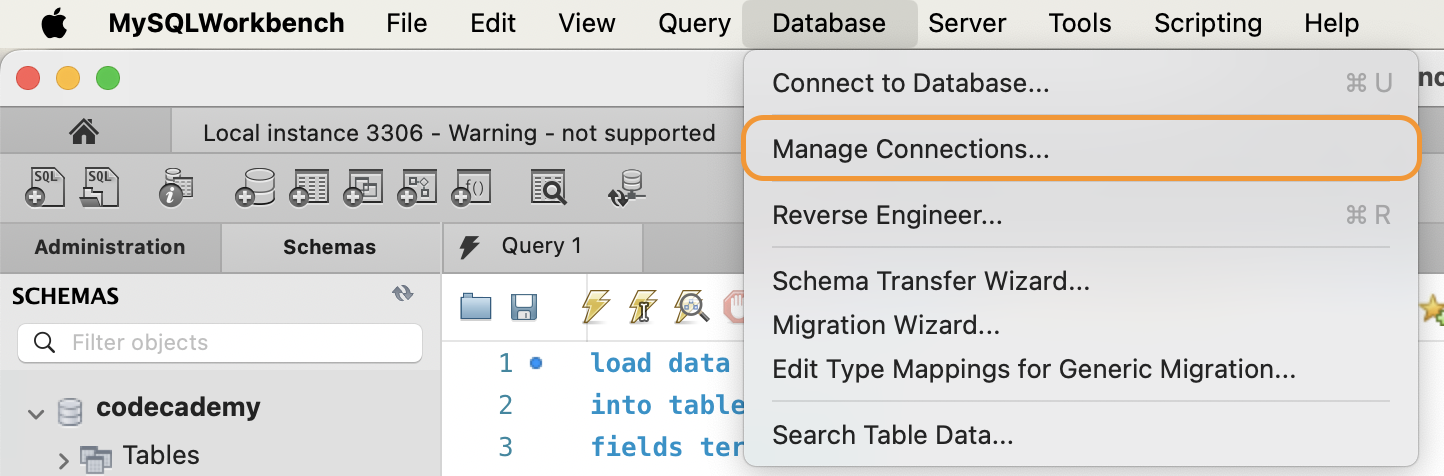

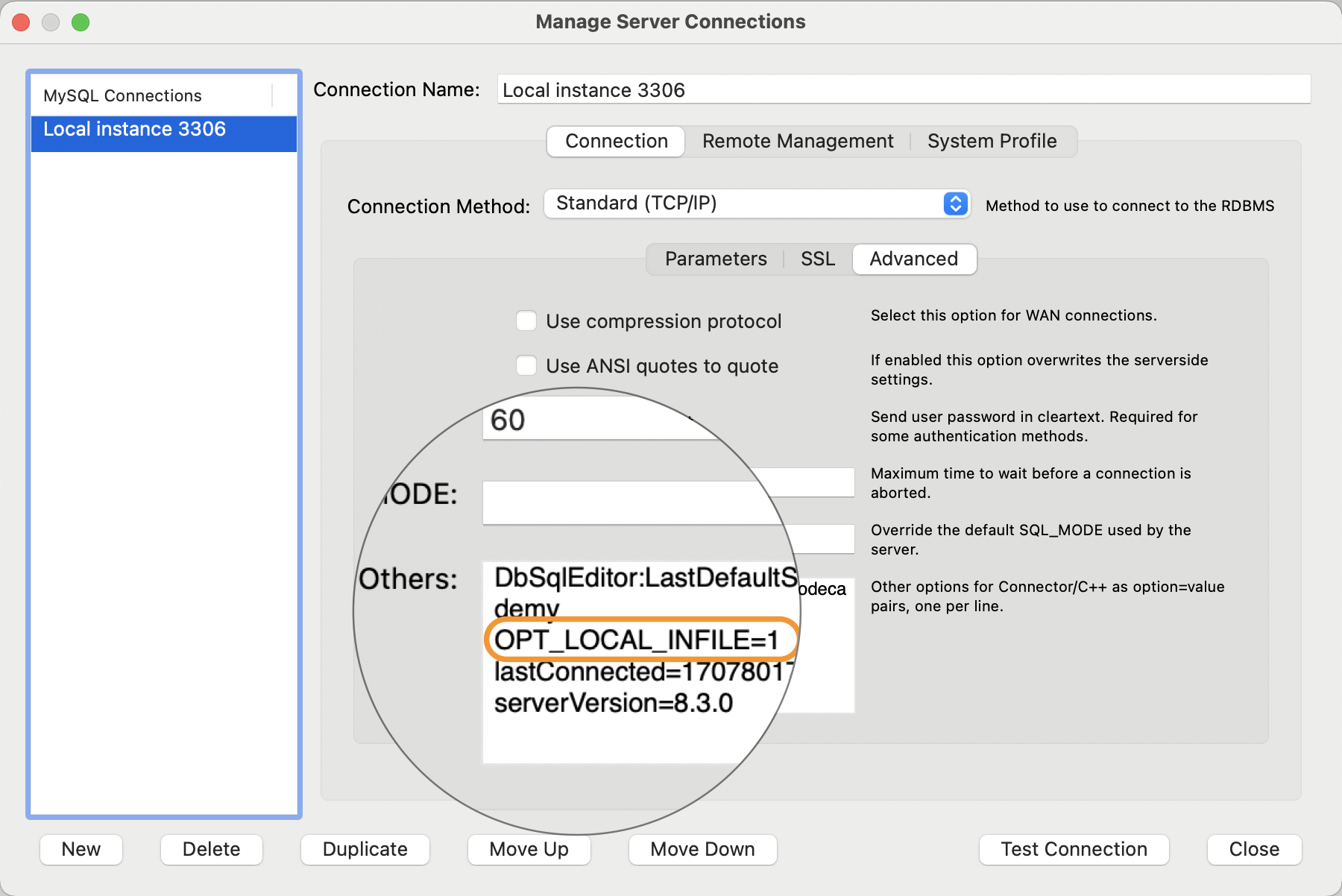

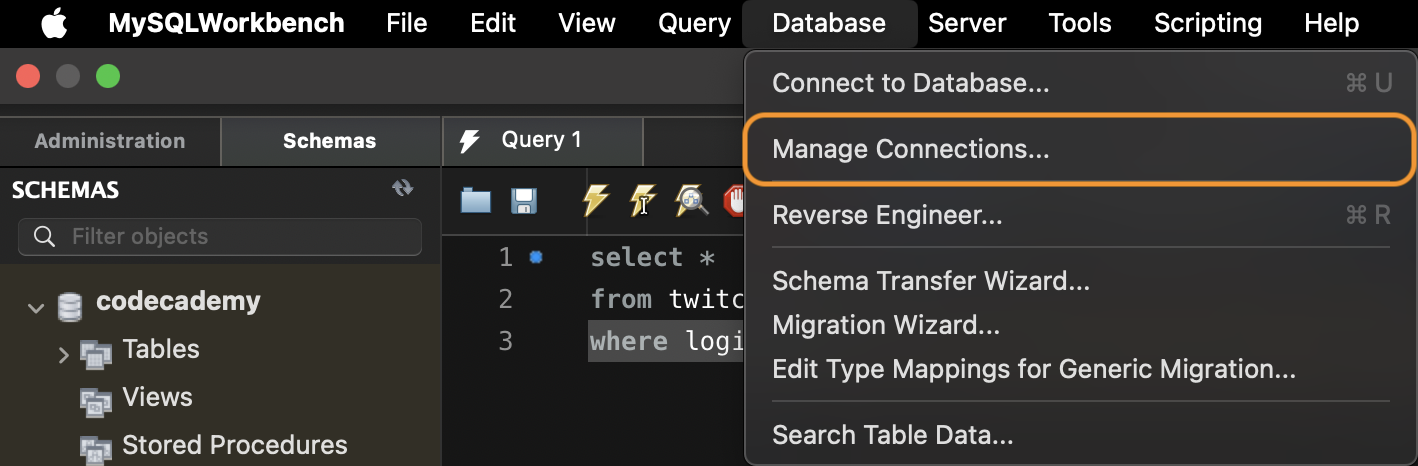

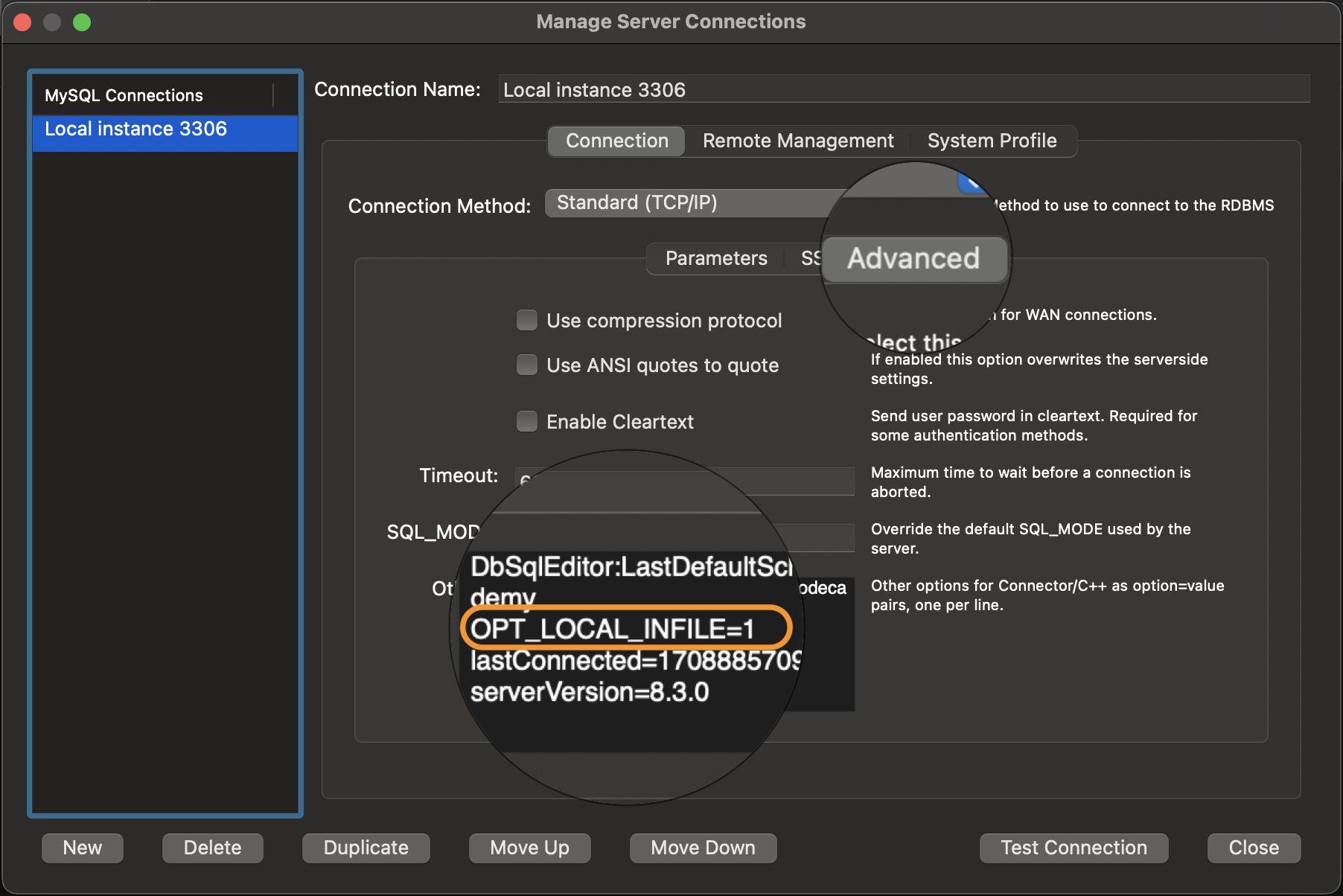

- In Workbench, go to Tools -> Database -> Manage Connections.

- Right-click the connection and click ‘Edit connection’.

- Select ‘Advanced’ option. Paste

OPT_LOCAL_INFILE=1in the ‘Others’ box. - Click ‘Test Connection’. It will successfully update the connection.

- Click close and restart Workbench.

You can also do this through the command line/ terminal.3

- Exit from

mysql. - Use command

mysql --local-infile=1 -u root -pto connect to mysql. USE databaseLOAD DATA INFILE ...;

Unhandled exception: ‘ascii’ codec can’t decode byte 0xef in position 0

This usually occurs when your

.csvfile is the result of an export from another application such as Google Sheets or Tableau Prep.

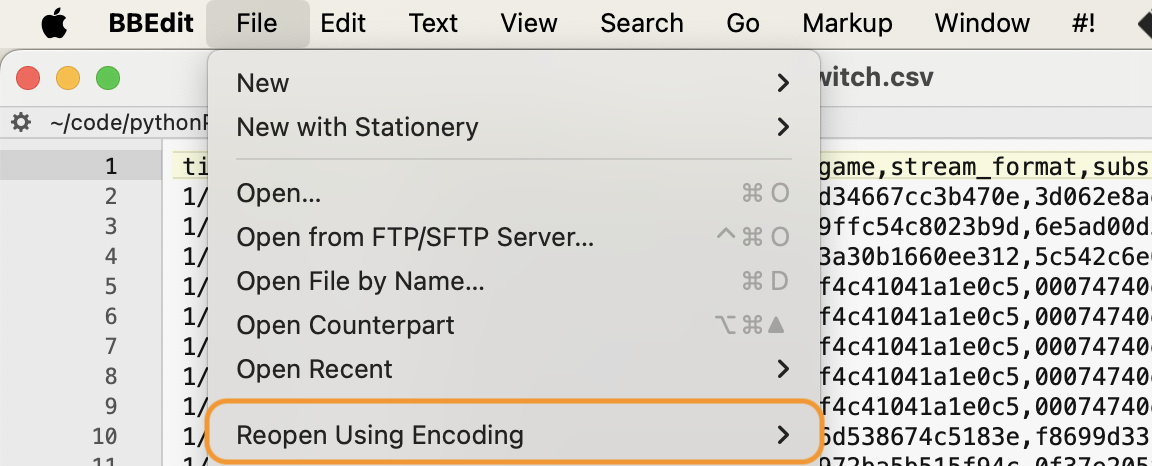

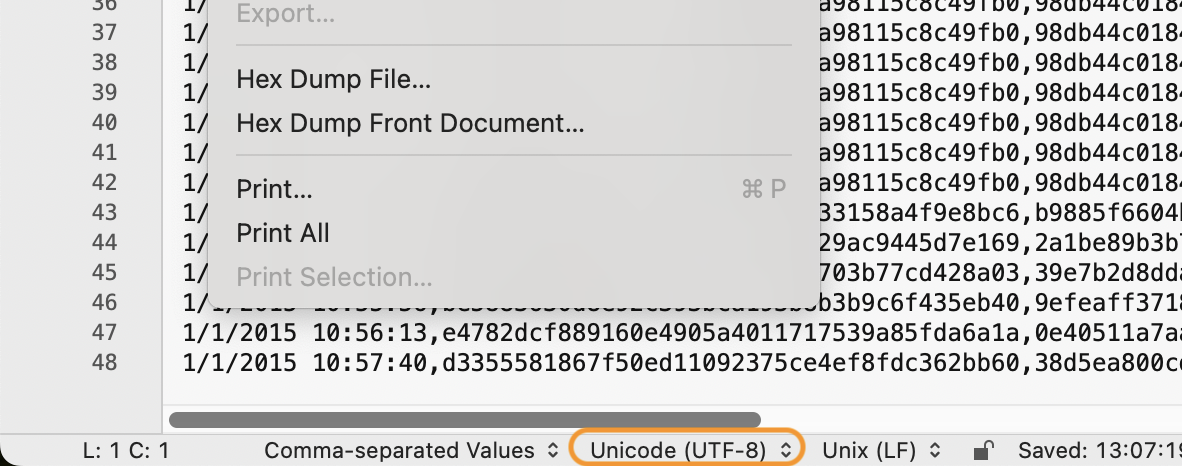

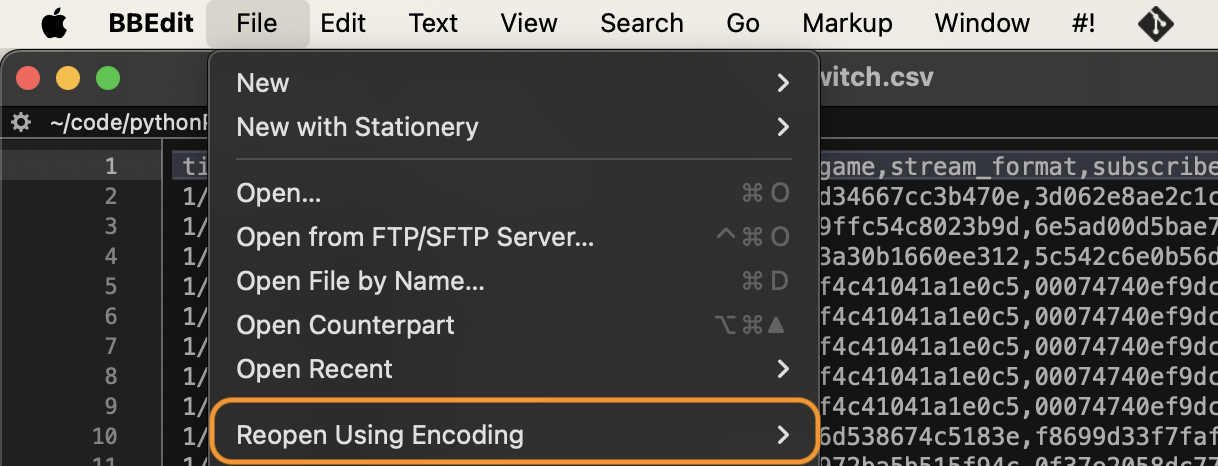

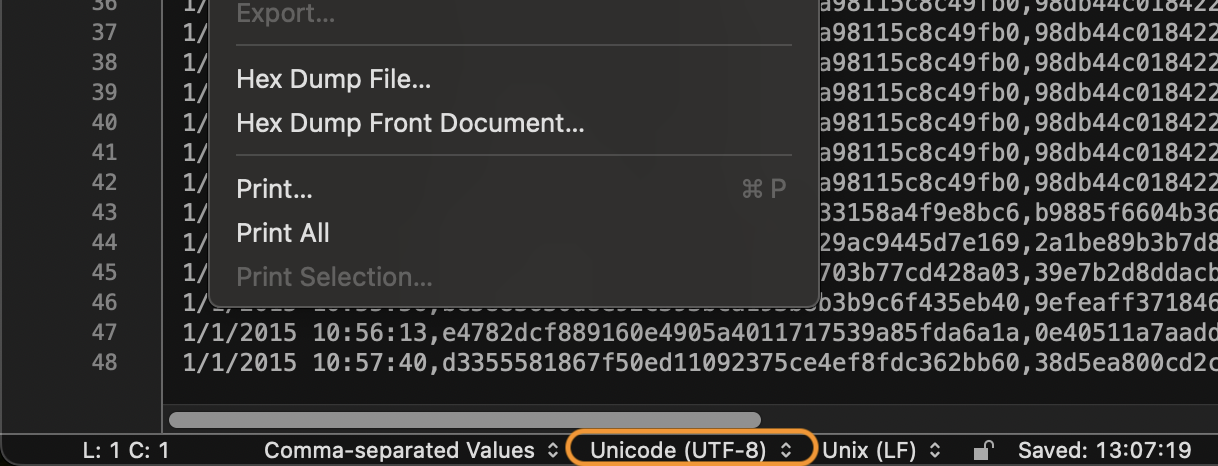

Force convert the file to utf-8 or ASCII to forego the problem. Most modern text editors can do this.4

I use BBEdit, which has a nice Document Text Encoding option on the bottom and a Reopen Using Encoding option under Files. Convert the encoding using either option and save the file anew.

This can be imported into MySQL Workbench without issues.

Final Thoughts

And voilà, this method of loading data to a table is superfast in mysql. With very large sets, we might want to break up this LOAD DATA INFILE import into manageable, reasonably sized chunks.5

We can also accomplish the above using mysqlimport. It’s a command line interface for MySQL’s LOAD DATA INFILE statement.6.

The wiki on how to use mysqlimport will be refined and updated at a later date after I’ve gained practical experience with it.

Thanks for reading! Hope this helps.

Resources

Error code 2068: file requested rejected due to restrictions on access with root user. ↩

ERROR: Loading local data is disabled - this must be enabled on both the client and server sides. ↩

MySQL Error: Unhandled exception: ‘ascii’ codec can’t decode byte 0xef in position 0: ordinal not in range(128) ↩